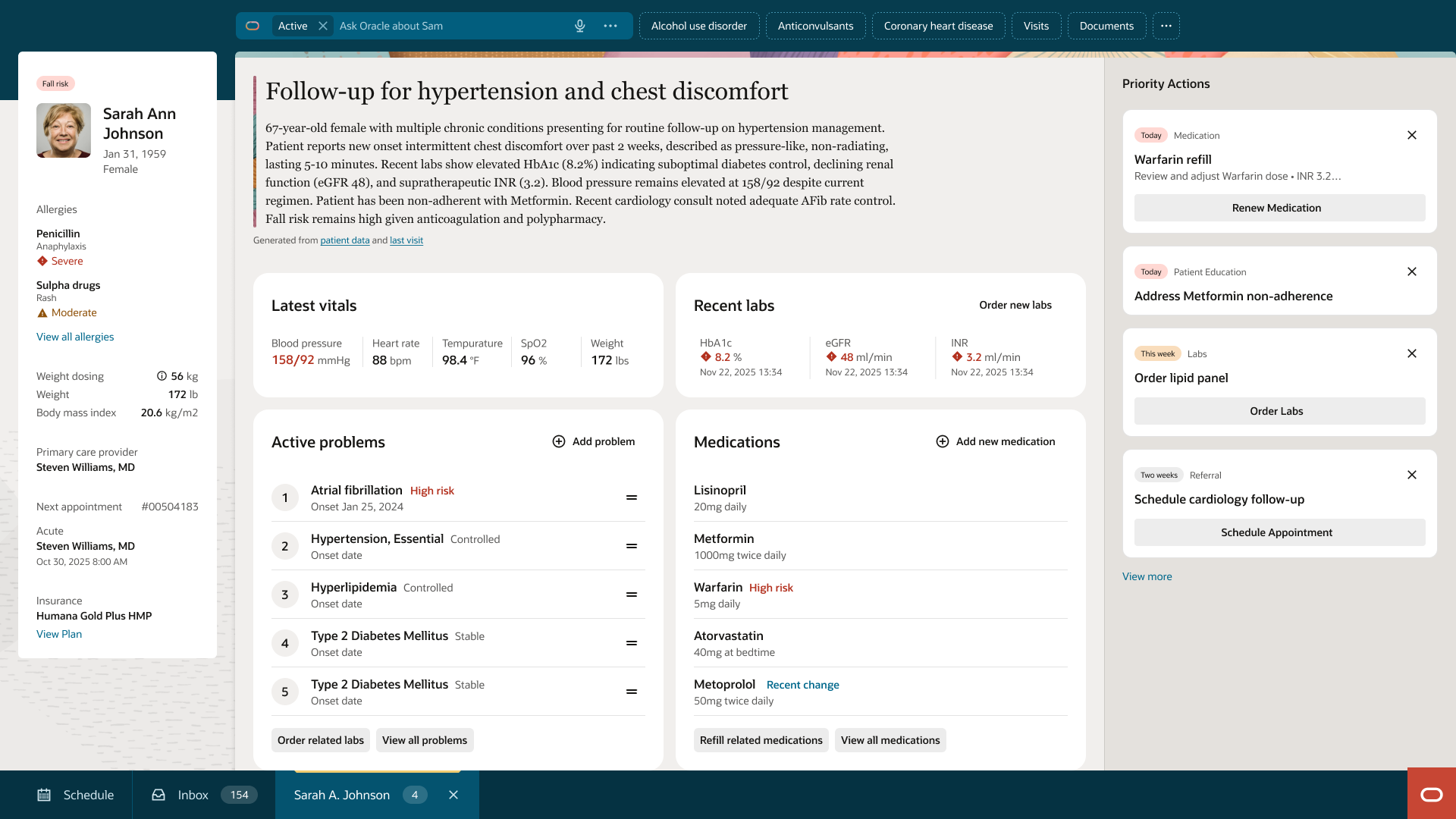

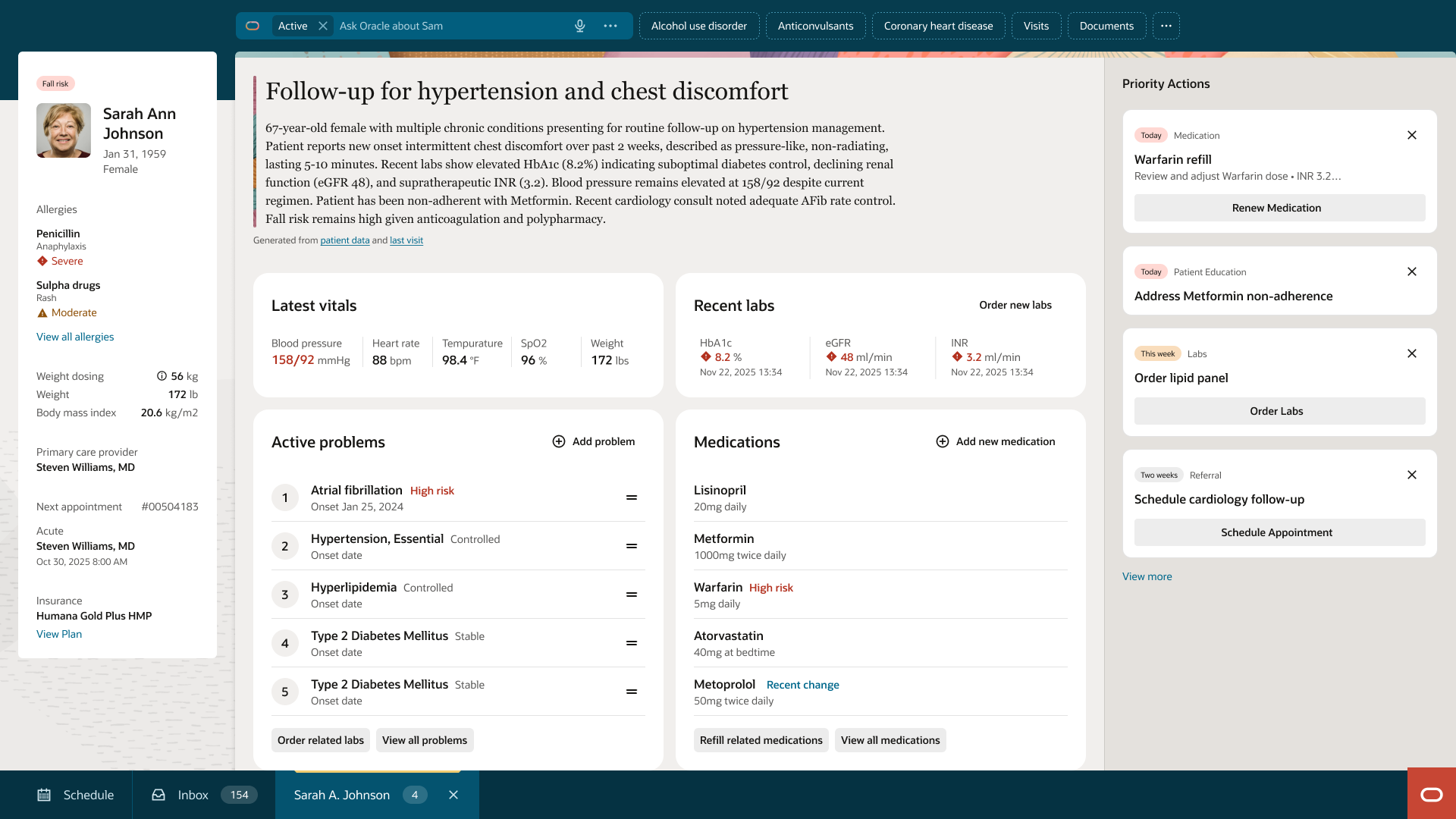

Establishing a shared data presentation language across the Oracle Health EHR — from patient overview to individual detail pages — across every clinical role and care venue.

The Oracle Health EHR had grown across years and acquisitions. Each product team made reasonable decisions in isolation. The result was an environment where the same patient data looked and behaved differently depending on which surface a clinician happened to be working in.

The Health Design System team's mandate was to solve that: to establish a shared data presentation framework that was clinically accurate, technically adoptable, and durable across a large, distributed product organization. As the principal UX designer embedded in the team, my work spanned direct physician collaboration, cross-team governance, and hands-on component specification.

A clinician who cannot instantly scan and interpret data is a clinician who pauses, double-checks, and sometimes misses what matters.

A structured audit across all ten product teams surfaced three compounding failures — each individually tolerable, together actively working against the people using the system.

Before any component work could begin, the team needed a foundational answer: what are the right ways to present clinical data, and when does each apply?

The taxonomy work ran in two parallel tracks — a bottom-up audit of existing patterns and a top-down physician collaboration process. The two fed each other iteratively. No form definition was stable until both tracks agreed.

| Form | When to use | What it communicates |
|---|---|---|
| List | Sequential data without comparative value; status scans; low-density contexts | Order, sequence, membership |
| Card | Discrete entities requiring action or navigation; patient-level summaries | Actionability, identity, priority grouping |
| Table | Multi-attribute data requiring comparison across rows; detailed records | Relationships between attributes, comparative value |
| Chart | Temporal trends or values requiring visual pattern recognition | Change over time, deviation from normal, magnitude |
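The table works as a decision rule. The TypeScript below is a minimal sketch of that rule. It assumes a hypothetical `DataProfile` whose flags paraphrase the "When to use" column. The flag names, the `chooseForm` helper, and the order in which forms are tested are all illustrative choices, not part of the published framework.

```typescript
// Forms from the taxonomy table.
type PresentationForm = "list" | "card" | "table" | "chart";

// Hypothetical description of a dataset; these flags are illustrative,
// not the framework's actual inputs.
interface DataProfile {
  temporalTrend: boolean;           // values tracked over time, e.g. a lab series
  needsPatternRecognition: boolean; // deviation from normal or magnitude must be seen at a glance
  attributeCount: number;           // attributes shown per record
  comparedAcrossRows: boolean;      // clinicians compare attributes across records
  actionableEntities: boolean;      // each item leads to an action or a detail page
}

// Walks the table's "When to use" column. The precedence (chart, table, card,
// then list as the fallback) is an assumption made for this sketch.
function chooseForm(p: DataProfile): PresentationForm {
  if (p.temporalTrend || p.needsPatternRecognition) return "chart";
  if (p.attributeCount > 1 && p.comparedAcrossRows) return "table";
  if (p.actionableEntities) return "card";
  return "list"; // sequential data without comparative value
}

// Usage: a multi-attribute record set compared row by row resolves to a table.
const labPanel = chooseForm({
  temporalTrend: false,
  needsPatternRecognition: false,
  attributeCount: 5,
  comparedAcrossRows: true,
  actionableEntities: false,
});
console.log(labPanel); // table
```

Testing the most specific forms first keeps "list" as the default for data that carries no comparative or temporal value.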

Each data field was assigned a visibility tier — always visible, default visible, or on-demand — and every tier assignment required physician sign-off before it could be considered stable. The tier framework was then mapped across role and venue combinations to determine default hierarchy configurations for shared components.
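This is a minimal sketch of how tier assignments and the sign-off rule could feed a role and venue default. It assumes a hypothetical `TierAssignment` record. The role names, venue names, field examples, and the `defaultHierarchy` helper are placeholders for illustration, not the EHR's actual configuration.

```typescript
// The three visibility tiers described above.
type VisibilityTier = "always" | "default" | "onDemand";

// Hypothetical role and venue values, for illustration only.
type Role = "physician" | "nurse" | "pharmacist";
type Venue = "inpatient" | "ambulatory" | "emergency";

interface TierAssignment {
  field: string;
  role: Role;
  venue: Venue;
  tier: VisibilityTier;
  physicianSignOff: boolean; // not stable until a physician has signed off
}

interface DefaultHierarchy {
  visible: string[];       // shown by default, "always" fields first
  onDemand: string[];      // available, but only revealed on request
  pendingReview: string[]; // assignments still awaiting physician sign-off
}

const TIER_RANK: Record<VisibilityTier, number> = { always: 0, default: 1, onDemand: 2 };

// Derives the default hierarchy a shared component would start from
// for one role/venue combination.
function defaultHierarchy(all: TierAssignment[], role: Role, venue: Venue): DefaultHierarchy {
  const scoped = all.filter((a) => a.role === role && a.venue === venue);
  const stable = scoped.filter((a) => a.physicianSignOff);
  return {
    visible: stable
      .filter((a) => a.tier !== "onDemand")
      .sort((a, b) => TIER_RANK[a.tier] - TIER_RANK[b.tier])
      .map((a) => a.field),
    onDemand: stable.filter((a) => a.tier === "onDemand").map((a) => a.field),
    pendingReview: scoped.filter((a) => !a.physicianSignOff).map((a) => a.field),
  };
}

// Usage with illustrative data.
const assignments: TierAssignment[] = [
  { field: "Active medications", role: "nurse", venue: "inpatient", tier: "default", physicianSignOff: true },
  { field: "Allergies", role: "nurse", venue: "inpatient", tier: "always", physicianSignOff: true },
  { field: "Social history", role: "nurse", venue: "inpatient", tier: "onDemand", physicianSignOff: true },
  { field: "Weight", role: "nurse", venue: "inpatient", tier: "default", physicianSignOff: false },
  { field: "Problem list", role: "physician", venue: "ambulatory", tier: "always", physicianSignOff: true },
];
const nurseInpatient = defaultHierarchy(assignments, "nurse", "inpatient");
// visible: ["Allergies", "Active medications"], onDemand: ["Social history"], pendingReview: ["Weight"]
```

Unsigned assignments go into `pendingReview` rather than being silently dropped. This keeps the sign-off gate visible in the output.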

Building the right components was necessary. Getting them used was the actual job.

The most contentious governance decision was defining the boundary between configurable and fixed. Every request for more configuration was evaluated against a single test: would this undermine cross-surface consistency for clinicians?

Teams owned their data and their copy. The design system team owned the visual and behavioral contract.
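The ownership split lends itself to a simple check. This sketch reduces the governance test to a lookup on a hypothetical `target` field that describes what a requested option would change. That is a deliberate simplification for illustration. The request shape and the function names are assumptions.

```typescript
// What a requested configuration option would let a product team change.
type ConfigTarget = "data" | "copy" | "visual" | "behavior";

// Hypothetical shape of a configuration request from a product team.
interface ConfigRequest {
  team: string;
  option: string;
  target: ConfigTarget;
}

// Ownership split: teams own data and copy; the design system team owns
// the visual and behavioral contract.
const TEAM_OWNED: ReadonlySet<ConfigTarget> = new Set<ConfigTarget>(["data", "copy"]);

// The single governance test, reduced to a lookup for this sketch: an option that
// reaches into the visual or behavioral contract would let the same data look or
// behave differently across surfaces, so it stays fixed.
function wouldUndermineConsistency(req: ConfigRequest): boolean {
  return !TEAM_OWNED.has(req.target);
}

function evaluateRequest(req: ConfigRequest): "configurable" | "fixed" {
  return wouldUndermineConsistency(req) ? "fixed" : "configurable";
}

// Usage
console.log(evaluateRequest({ team: "Team A", option: "column header label", target: "copy" }));         // configurable
console.log(evaluateRequest({ team: "Team B", option: "custom abnormal-value color", target: "visual" })); // fixed
```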

There is a structural tension in design system work: the teams being asked to adopt shared components are the same teams that built the patterns being replaced.

Physician input did not refine the system at the margins. It changed it substantively.

A standing physician advisory group spanning multiple specialties and care settings was embedded into every phase of the work — not sequenced as a final validation step. No data tier assignment, no visualization form decision, and no behavioral specification was considered stable until reviewed by at least two physicians with relevant clinical context.
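The review rule can be stated precisely. This minimal sketch assumes that "relevant clinical context" means sharing at least one specialty or care setting with the decision under review. That definition, the `Decision` and `PhysicianReview` shapes, and the function names are hypothetical.

```typescript
interface PhysicianReview {
  reviewer: string;
  specialties: string[];
  careSettings: string[];
}

// Any decision type named above: tier assignment, visualization form, or behavior spec.
interface Decision {
  id: string;
  kind: "tierAssignment" | "visualizationForm" | "behaviorSpec";
  relevantSpecialties: string[];
  relevantCareSettings: string[];
  reviews: PhysicianReview[];
}

const MIN_RELEVANT_REVIEWERS = 2;

// Assumed definition of "relevant clinical context" for this sketch.
function hasRelevantContext(r: PhysicianReview, d: Decision): boolean {
  return (
    r.specialties.some((s) => d.relevantSpecialties.includes(s)) ||
    r.careSettings.some((c) => d.relevantCareSettings.includes(c))
  );
}

// Stable only when at least two distinct physicians with relevant context have reviewed it;
// the Set ensures a repeat review by the same physician is counted once.
function isStable(d: Decision): boolean {
  const relevant = new Set(
    d.reviews.filter((r) => hasRelevantContext(r, d)).map((r) => r.reviewer),
  );
  return relevant.size >= MIN_RELEVANT_REVIEWERS;
}

// Usage with illustrative reviewers.
const draft: Decision = {
  id: "tier:allergies",
  kind: "tierAssignment",
  relevantSpecialties: ["internal medicine"],
  relevantCareSettings: ["inpatient"],
  reviews: [
    { reviewer: "Dr. A", specialties: ["internal medicine"], careSettings: ["ambulatory"] },
    { reviewer: "Dr. B", specialties: ["dermatology"], careSettings: ["ambulatory"] },
  ],
};
console.log(isStable(draft)); // false: only Dr. A has relevant context
```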

| Phase | Input sought | What changed |
|---|---|---|
| Discovery | Confirmation that observed inconsistencies created real workflow friction | Elevated scope from cosmetic to clinical-safety initiative |
| Data hierarchy | Tier assignment review for every data field in scope | 32% of proposed tier assignments were revised |
| Taxonomy | Validation of form-to-context mapping for clinical workflows | Chart form scoped more narrowly; card permitted in specific medication workflows |
| Component review | Evaluation of prototype components against real clinical tasks | Alert banner dismiss redesigned with role-based permissions |
| Anti-pattern library | Review of proposed anti-patterns for clinical accuracy | Three anti-patterns added based on physician-identified failure modes |

Physician advisors reviewed components against real clinical tasks — not design screenshots.

32% of tier assignments changed after physician review. Some of the most important changes involved fields designers hadn't considered at all.

The most important outcome is not the components. It is the process by which components are now created and validated — a shared language, a governance model, and a physician validation process that can hold as the system grows.
